The proliferation of deepfakes represents one of the most pressing digital threats of our time. In 2024, deepfake detection has become a critical priority as synthetic media becomes increasingly sophisticated and accessible. The global market value for AI-generated deepfakes reached USD 79.1 million by the end of 2024, with a staggering 37.6% compound annual growth rate (CAGR) in recent years, highlighting the rapid expansion of this technology.

The statistics paint a concerning picture: in 2023, there were 95,820 deepfake videos online, a 550% increase since 2019. Even more alarming, the number of online deepfake videos is reported to double approximately every six months. From June to December 2020 alone, the count rose from 49,081 to 85,047, an increase of roughly 73%, which shows how quickly the volume of content that detection systems must screen is growing.
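As a quick check on these figures, the sketch below computes the six-month growth factor and the implied doubling time from the two 2020 counts quoted above. The function names are illustrative, and the calculation assumes steady exponential growth between the two counts, which the source does not state.

```python
import math

def growth_factor(start: float, end: float) -> float:
    """Ratio of the later count to the earlier one."""
    return end / start

def doubling_time(start: float, end: float, period_months: float) -> float:
    """Months needed to double, assuming constant exponential growth."""
    return period_months * math.log(2) / math.log(end / start)

# Counts quoted in the text for June and December 2020.
factor = growth_factor(49_081, 85_047)
months = doubling_time(49_081, 85_047, period_months=6)
print(f"six-month growth factor: {factor:.2f}")
print(f"implied doubling time: {months:.1f} months")
```

Under that assumption, these two counts imply a doubling time somewhat longer than six months, so the six-month figure is best read as a rough approximation.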

Modern deepfakes now encompass multiple formats, including:

- Video deepfakes (manipulated facial expressions and movements)

- Audio deepfakes (synthetic voice recreation)

- Combined formats (synchronized audio-visual manipulations)

Audio deepfakes in particular have seen a troubling surge in malicious use, with deepfake fraud rising more than 10 times from 2022 to 2023, according to University of Florida researchers. This highlights the urgent need for advanced synthetic media detection techniques across all media types.

The risks posed by deepfakes extend beyond mere misinformation. They include identity fraud, reputation damage, and significant security threats. In 2024, there has been a 3,000% increase in attempts to use deepfakes to bypass identity verification systems, as reported by identity verification company Onfido. As synthetic media creation technology advances, so too must our approaches to deepfake identification and visual forgery detection.

The Science Behind Deepfake Detection

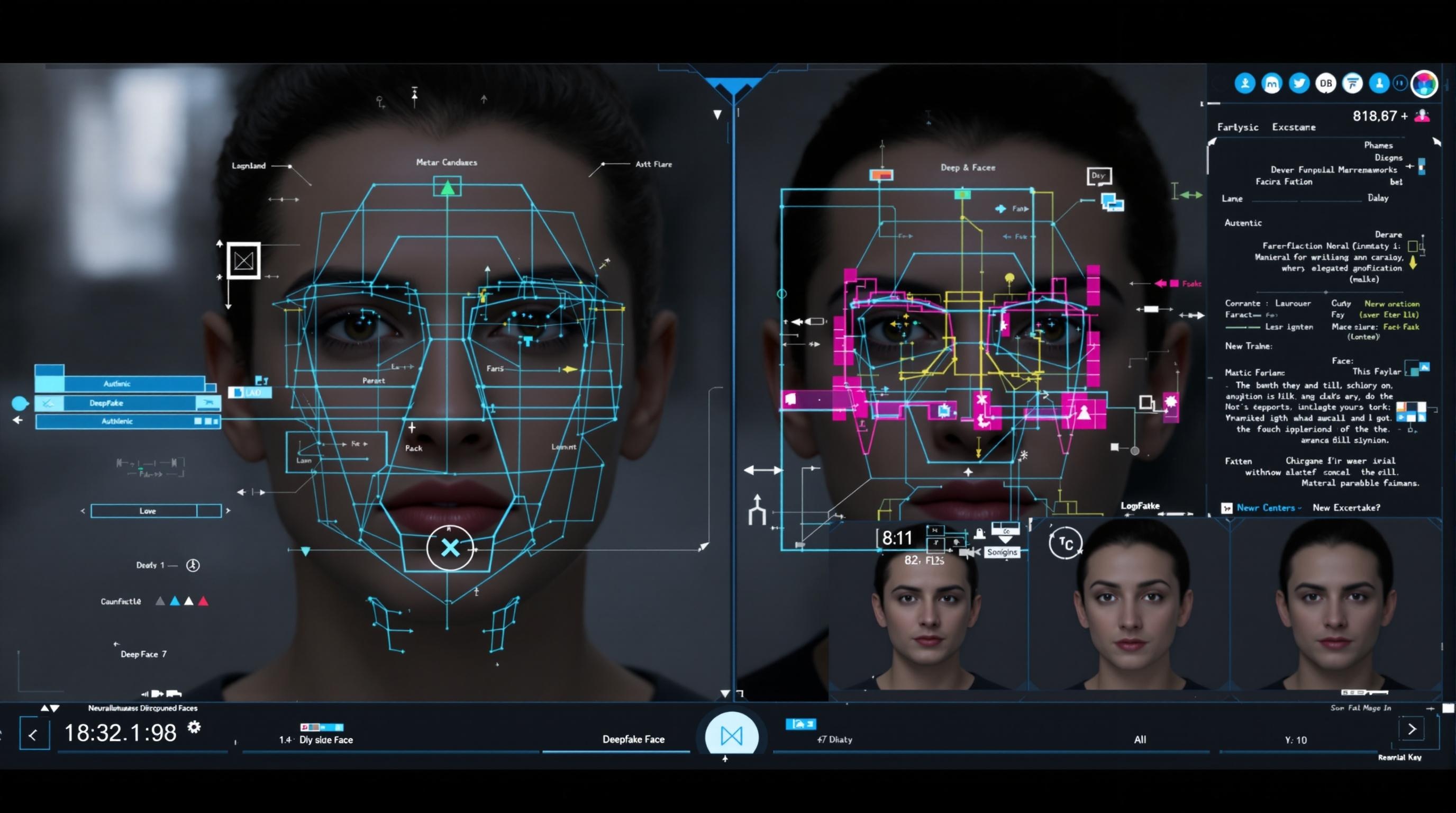

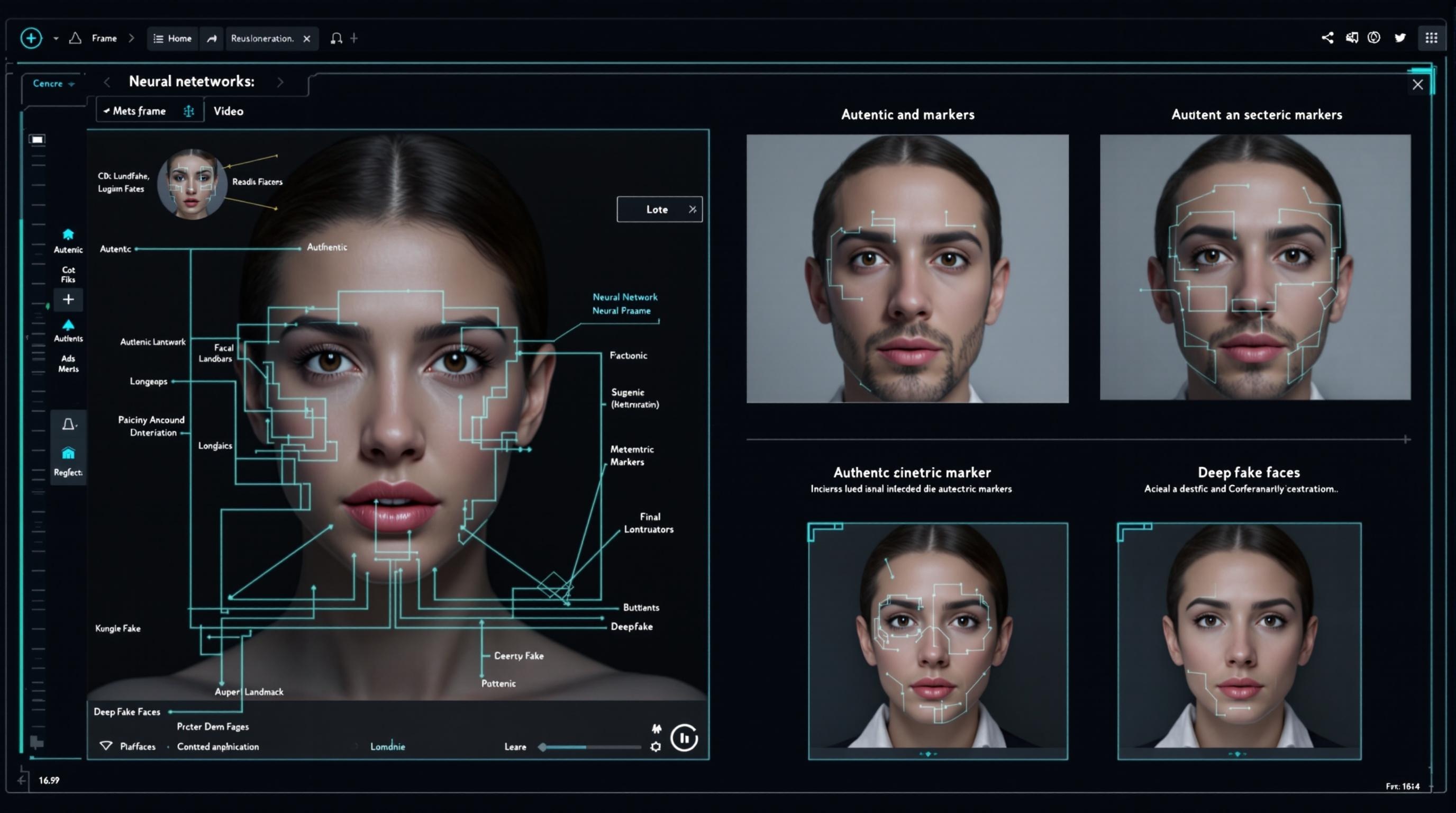

Effective deepfake detection relies on AI-based methods that analyze digital media for inconsistencies that may elude human perception. At the core of these technologies are deep learning algorithms, which have evolved significantly in recent years.

Modern detection systems leverage complex neural networks trained on vast datasets containing both authentic and manipulated media. These networks learn to identify subtle patterns and anomalies that distinguish genuine content from synthetic creations, and machine learning has proven particularly effective at picking up these nuanced differences.

Detection methodologies typically focus on several key approaches:

- Visual analysis: Examining facial movements, expressions, and physiological inconsistencies

- Audio examination: Identifying unnatural voice patterns or audio artifacts

- Temporal coherence: Detecting inconsistencies across video frames over time

- Metadata investigation: Analyzing digital fingerprints and file properties

According to recent research, multimodal detection approaches that combine multiple analysis methods demonstrate the highest accuracy rates in deepfake forensics. These comprehensive systems analyze both visual and audio components while examining metadata for signs of manipulation, providing more robust detection capabilities than single-method approaches.
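One simple way to combine several analysis methods is late fusion, where each detector produces its own score and the scores are averaged with weights. The following is a minimal sketch of that idea, not the method of any system named in this article; the modality names, scores, and weights are all hypothetical.

```python
from typing import Dict

def fuse_scores(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-modality manipulation scores in [0, 1]."""
    used = [m for m in scores if m in weights]
    if not used:
        raise ValueError("no modality has both a score and a weight")
    total = sum(weights[m] for m in used)
    return sum(scores[m] * weights[m] for m in used) / total

# Hypothetical detector outputs (1.0 = almost certainly manipulated).
scores = {"visual": 0.82, "audio": 0.40, "temporal": 0.71, "metadata": 0.10}
# Hypothetical weights; in practice these would be tuned on validation data.
weights = {"visual": 0.4, "audio": 0.3, "temporal": 0.2, "metadata": 0.1}

fused = fuse_scores(scores, weights)
print(f"fused manipulation score: {fused:.2f}")
```

Because the weights are renormalized over the modalities that are present, the same function still works when a clip has no audio track or no usable metadata.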

Technical Markers of Synthetic Media

Successful face manipulation detection relies on identifying specific technical artifacts and inconsistencies that frequently appear in synthetic media. These markers serve as telltale signs for visual forgery detection systems.

Facial inconsistencies represent one of the most reliable indicators of manipulation. Despite advances in deepfake technology, synthetic faces often exhibit subtle abnormalities in blinking patterns, micro-expressions, or skin texture. AI detection models are trained to identify these unnatural elements that humans might miss during casual viewing.
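One widely used blink cue is the eye aspect ratio (EAR), which drops sharply when the eye closes. The sketch below assumes six landmarks per eye are already available from a face landmark detector (not shown); the threshold of 0.2 and the minimum run length are illustrative values, not calibrated ones.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]

def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR from six eye landmarks ordered p1..p6 (p1 and p4 are the corners)."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)

def count_blinks(ear_series: Sequence[float], threshold: float = 0.2,
                 min_frames: int = 2) -> int:
    """Count runs of at least min_frames consecutive frames below threshold."""
    blinks, run = 0, 0
    for ear in ear_series:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress at the end of the clip
        blinks += 1
    return blinks

def blinks_per_minute(ear_series: Sequence[float], fps: float) -> float:
    """Blink rate, for comparison against a typical human range."""
    minutes = len(ear_series) / fps / 60.0
    return count_blinks(ear_series) / minutes

# Hypothetical per-frame EAR values: one real blink, one single-frame dip.
series = [0.3] * 10 + [0.1] * 3 + [0.3] * 10 + [0.1] * 1 + [0.3] * 6
print("blinks detected:", count_blinks(series))
```

A clip whose blink rate is far outside the usual human range would only be a hint worth combining with other signals, not proof of manipulation.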

Audio-visual synchronization issues frequently betray deepfakes as well. Even sophisticated manipulations may struggle to maintain perfect lip synchronization throughout a video, creating timing discrepancies of a frame or two (tens of milliseconds) that detection algorithms can identify. These misalignments are critical markers for video manipulation detection.
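A basic way to measure lip-sync drift is to cross-correlate a per-frame mouth-opening signal with the audio energy envelope and look for the lag that aligns them best. The sketch below assumes both signals already exist, have the same length, and are sampled at the video frame rate; how they are extracted is outside its scope, and the function name is illustrative.

```python
import numpy as np

def estimate_lag_frames(mouth_open: np.ndarray, audio_energy: np.ndarray,
                        max_lag: int = 10) -> int:
    """Lag (in frames) at which audio energy best matches mouth opening.

    A positive result means the audio trails the mouth movement.
    """
    a = (mouth_open - mouth_open.mean()) / (mouth_open.std() + 1e-9)
    b = (audio_energy - audio_energy.mean()) / (audio_energy.std() + 1e-9)
    best_lag, best_score = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            x, y = a[:len(a) - lag], b[lag:]
        else:
            x, y = a[-lag:], b[:len(b) + lag]
        score = float(np.mean(x * y))  # normalized correlation at this lag
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag

# Synthetic example: the "audio" is the mouth signal delayed by three frames.
rng = np.random.default_rng(0)
mouth = rng.random(200)
audio = np.roll(mouth, 3)
lag = estimate_lag_frames(mouth, audio)
print(f"estimated lag: {lag} frames ({lag / 25 * 1000:.0f} ms at 25 fps)")
```

A lag that stays near zero is expected for genuine footage; a lag that is large or that wanders over the course of a clip is the kind of misalignment described above.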

Compression artifacts and quality inconsistencies also provide valuable clues. Deepfakes often display unusual compression patterns or quality disparities between manipulated and unmanipulated areas of the same frame. These technical inconsistencies serve as key indicators for image manipulation detection.
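The idea of quality disparities within a frame can be illustrated by scoring each block of an image for fine detail and flagging blocks that differ sharply from the rest. This is a simplified sketch using only pixel differences and a robust z-score; the block size and threshold are assumptions, and real forensic tools use considerably richer features.

```python
import numpy as np

def block_detail(gray: np.ndarray, block: int = 16) -> np.ndarray:
    """Mean squared horizontal and vertical pixel difference per block."""
    h, w = gray.shape
    h, w = h - h % block, w - w % block  # crop to a whole number of blocks
    g = gray[:h, :w].astype(np.float64)
    dx = np.zeros_like(g)
    dy = np.zeros_like(g)
    dx[:, 1:] = np.diff(g, axis=1)
    dy[1:, :] = np.diff(g, axis=0)
    energy = dx ** 2 + dy ** 2
    return energy.reshape(h // block, block, w // block, block).mean(axis=(1, 3))

def flag_outlier_blocks(scores: np.ndarray, z: float = 3.5) -> np.ndarray:
    """Boolean mask of blocks far from the median (robust z-score via MAD)."""
    med = np.median(scores)
    mad = np.median(np.abs(scores - med)) + 1e-9
    return np.abs(scores - med) / (1.4826 * mad) > z

# Synthetic example: a noisy image with one unnaturally smooth 32x32 patch.
rng = np.random.default_rng(0)
image = 128 + 10 * rng.standard_normal((128, 128))
image[32:64, 32:64] = 128.0
mask = flag_outlier_blocks(block_detail(image))
print("flagged blocks (row, col):", np.argwhere(mask).tolist())
```

On natural images, smooth regions such as sky would also be flagged, so a measure like this is only useful alongside knowledge of where the face is and what the surrounding texture looks like.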

Perhaps most fascinating are the biometric inconsistencies that detection systems can identify. Advanced algorithms can detect abnormalities in physiological patterns such as:

- Irregular or absent blinking patterns

- Missing or artificial pulse signatures visible in skin coloration

- Inconsistent blood flow indicators

- Unnatural head movements and posture shifts

By analyzing these technical markers, AI-powered deepfake detection systems can achieve increasingly accurate results in identifying synthetic media across platforms.
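The pulse signature mentioned above is usually approached through remote photoplethysmography: the average skin color of the face varies slightly with each heartbeat. A minimal sketch follows, assuming a per-frame mean green-channel value for a face region has already been extracted; the frequency band limits and the function name are illustrative assumptions.

```python
import numpy as np

def estimate_pulse(green_means: np.ndarray, fps: float,
                   low_hz: float = 0.7, high_hz: float = 4.0):
    """Return (bpm, peak_share) for the strongest frequency in the pulse band.

    peak_share is the fraction of in-band power held by that single peak;
    a low value suggests there is no clear periodic pulse signal.
    """
    signal = np.asarray(green_means, dtype=np.float64)
    signal = signal - signal.mean()
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fps)
    band = (freqs >= low_hz) & (freqs <= high_hz)
    band_power = power[band]
    idx = int(np.argmax(band_power))
    bpm = float(freqs[band][idx] * 60.0)
    peak_share = float(band_power[idx] / (band_power.sum() + 1e-12))
    return bpm, peak_share

# Synthetic 10-second trace at 30 fps with a 1.2 Hz (72 bpm) component.
fps = 30.0
t = np.arange(300) / fps
rng = np.random.default_rng(0)
trace = 0.5 * np.sin(2 * np.pi * 1.2 * t) + 0.1 * rng.standard_normal(300)
bpm, share = estimate_pulse(trace, fps)
print(f"estimated pulse: {bpm:.0f} bpm, peak share: {share:.2f}")
```

In this framing, a synthetic face would be suspected when no dominant peak appears in the band, or when different face regions disagree about the rate.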

Leading AI-Based Deepfake Detection Tools in 2024

Enterprise-Grade Solutions

For organizations requiring industrial-strength deepfake detection software, several enterprise solutions lead the market in 2024. These platforms provide comprehensive protection against sophisticated synthetic media threats.

Microsoft Video Authenticator stands as one of the most powerful tools for video authenticity verification. This system analyzes media in real time, generating a confidence score that indicates the likelihood of manipulation. The platform excels at detecting subtle facial manipulation indicators that might otherwise go unnoticed, making it valuable for media organizations and security professionals.

Sensity’s deepfake detection platform offers specialized capabilities for monitoring and identifying synthetic media across digital channels. Their system employs advanced visual media authenticity verification methods to scan content on social platforms, websites, and private networks. With a focus on early threat detection, Sensity provides alerts about potentially fraudulent media before it can cause significant harm.

Sentinel provides real-time monitoring for organizations concerned about reputational risks or disinformation campaigns. Its technology specializes in video manipulation identification, with particular strength in detecting political deepfakes and celebrity impersonations. The platform combines AI analysis with human verification to improve accuracy.

Truepic offers robust image and video verification technology that establishes and confirms digital media provenance. Their system creates cryptographic signatures at the point of capture, providing a verifiable chain of custody for media content. This approach to media authenticity assurance is particularly valuable for legal applications and verified journalism.
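The principle of signing media at the point of capture can be sketched with the Python standard library. This is not Truepic's implementation: it uses an HMAC with a shared secret as a stand-in, whereas real provenance systems generally rely on asymmetric signatures and device-held keys. The key and byte strings are hypothetical.

```python
import hashlib
import hmac

def sign_media(data: bytes, key: bytes) -> str:
    """Sign the SHA-256 digest of the media bytes with an HMAC."""
    digest = hashlib.sha256(data).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()

def verify_media(data: bytes, key: bytes, signature: str) -> bool:
    """True only if the media bytes are unchanged since signing."""
    return hmac.compare_digest(sign_media(data, key), signature)

# Hypothetical capture device key and media payload.
device_key = b"example-device-key"
original = b"\x00\x01raw-image-bytes"
signature = sign_media(original, device_key)

print("original verifies:", verify_media(original, device_key, signature))
print("edited verifies:", verify_media(original + b"!", device_key, signature))
```

Any change to the bytes after capture, even a single one, makes verification fail, which is what gives the chain of custody its value.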

Open-Source and Research Tools

The open-source community continues to make significant contributions to deepfake detection in 2024, with several frameworks providing accessible options for researchers and developers.

FaceForensics++ remains one of the most widely used benchmarks and toolkits for training and evaluating deepfake detection models. This framework provides standardized datasets and evaluation metrics that help researchers compare different approaches to media tampering detection. Its comprehensive design makes it valuable for both academic research and commercial development.
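Two metrics commonly reported on benchmarks of this kind are accuracy at a fixed threshold and the area under the ROC curve (AUC). The sketch below computes both in plain Python; the labels and scores are made up for illustration and do not come from any benchmark.

```python
from typing import Sequence

def accuracy(labels: Sequence[int], scores: Sequence[float],
             threshold: float = 0.5) -> float:
    """Share of items classified correctly (label 1 = fake, 0 = real)."""
    correct = sum(int(s >= threshold) == l for l, s in zip(labels, scores))
    return correct / len(labels)

def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random fake scores higher than a random real item."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical detector scores for three fake and three real videos.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
print(f"accuracy at 0.5: {accuracy(labels, scores):.3f}")
print(f"ROC AUC: {roc_auc(labels, scores):.3f}")
```

AUC is useful for comparing approaches because it does not depend on a particular threshold, while accuracy reflects how a detector behaves at the operating point actually deployed.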

The DeepFake Detection Challenge (DFDC), originally organized by Facebook and AWS, continues to influence the field with its extensive dataset and evaluation framework. The challenge spurred significant advances in image and video forensics, with many of the resulting tools now available as open-source resources. Researchers frequently use DFDC datasets as a starting point for developing new detection methods.

Google’s DeepFake Detection dataset provides another valuable resource for training and evaluating detection models. This large, diverse collection includes thousands of manipulated videos created using various techniques, allowing researchers to test their algorithms against a wide range of synthetic media types. The dataset is particularly useful for developing generalized detection approaches that work across different manipulation methods.

Browser Extensions and Consumer Tools

As deepfakes increasingly threaten everyday internet users, several accessible tools have emerged to help individuals verify content they encounter online.

Browser extensions like “Deepfake Detector” and “Reality Defender” integrate directly with web browsers to flag potentially manipulated media in real time. These tools give users browsing social media or news sites a convenient way to verify what they see, offering immediate alerts about suspicious content without requiring technical expertise.

Mobile applications have also entered the market, providing on-the-go deepfake detection capabilities. Apps like “Fake Video Detection” and “DeepWare Scanner” allow users to analyze videos directly from their smartphones, making detection technology accessible to the general public. These tools typically employ simplified versions of more complex detection algorithms, balancing accuracy with user-friendly interfaces.

Many of these consumer tools also include educational components that help users understand the warning signs of manipulated media, further enhancing public awareness of the problem. While generally less powerful than enterprise solutions, these accessible tools play a crucial role in democratizing access to fake video identification.

Audio Deepfake Detection Approaches

As audio deepfakes become increasingly prevalent, specialized detection techniques have evolved to identify synthetic speech and manipulated recordings. These audio deepfake detection methods focus on analyzing voice patterns and acoustic properties that distinguish authentic from synthetic audio.

Voice pattern analysis represents the foundation of audio authentication. Advanced AI models analyze speech for natural variations in pitch, tone, rhythm, and emotional inflection that are difficult to perfectly replicate. These systems create voiceprints of known individuals and compare new recordings against these established patterns to identify potential forgeries.
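Comparing a new recording against an enrolled voiceprint is often done by measuring the similarity of two embedding vectors. The sketch below assumes the embeddings come from some speaker-embedding model (not shown); the vectors and the 0.75 threshold are hypothetical.

```python
import numpy as np

def cosine_similarity(a, b) -> float:
    """Cosine of the angle between two embedding vectors."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

def matches_voiceprint(enrolled, candidate, threshold: float = 0.75) -> bool:
    """True if the candidate is close enough to the enrolled voiceprint."""
    return cosine_similarity(enrolled, candidate) >= threshold

# Hypothetical 4-dimensional embeddings (real ones have far more dimensions).
enrolled = np.array([0.9, 0.1, 0.3, 0.2])
same_speaker = np.array([0.85, 0.15, 0.28, 0.25])
other_voice = np.array([0.1, 0.9, 0.2, 0.7])

print("same speaker matches:", matches_voiceprint(enrolled, same_speaker))
print("other voice matches:", matches_voiceprint(enrolled, other_voice))
```

A high-quality voice clone may still pass a similarity check like this one, which is why voiceprint matching is typically paired with artifact-based detection rather than used alone.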

Frequency anomaly detection provides another powerful approach. Digital audio forgery detection tools examine spectrograms and frequency distributions to identify unnatural patterns or artifacts introduced during the synthesis process. Even sophisticated AI voice models often leave subtle frequency signatures that detection algorithms can recognize.
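To make the spectrogram idea concrete, the sketch below computes a short-time power spectrum with NumPy and reports, for each frame, the share of energy above a cutoff frequency. The 4 kHz cutoff and the feature itself are illustrative assumptions; which frequency patterns actually indicate synthesis depends on the generator and has to be learned from data.

```python
import numpy as np

def spectrogram(signal: np.ndarray, frame_len: int = 512,
                hop: int = 256) -> np.ndarray:
    """Power spectrogram with shape (n_frames, frame_len // 2 + 1)."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1)) ** 2

def high_band_ratio(signal: np.ndarray, sample_rate: float,
                    cutoff_hz: float = 4000.0, frame_len: int = 512,
                    hop: int = 256) -> np.ndarray:
    """Per-frame fraction of spectral energy at or above cutoff_hz."""
    spec = spectrogram(signal, frame_len, hop)
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / sample_rate)
    high = spec[:, freqs >= cutoff_hz].sum(axis=1)
    return high / (spec.sum(axis=1) + 1e-12)

# Synthetic one-second tones at a 16 kHz sample rate.
sr = 16_000
t = np.arange(sr) / sr
low_tone = np.sin(2 * np.pi * 440 * t)
high_tone = np.sin(2 * np.pi * 6000 * t)
print(f"440 Hz tone: {high_band_ratio(low_tone, sr).mean():.3f}")
print(f"6 kHz tone: {high_band_ratio(high_tone, sr).mean():.3f}")
```

In a real detector, features like this would feed a trained classifier instead of being compared against a hand-picked threshold.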

Specialized AI models for distinguishing synthetic speech have made significant progress in recent years. These systems train on massive datasets of both authentic and manipulated audio to identify the characteristic markers of artificially generated voices. According to Spiralytics, these models can now detect audio deepfakes with accuracy rates exceeding 85% for many common manipulation techniques.

Real-time audio authentication tools have also emerged for live verification scenarios. These systems analyze streaming audio during phone calls, video conferences, or broadcast media to identify potential manipulation as it occurs. Such tools are particularly valuable for financial institutions and security services where audio verification plays a critical role in identity confirmation.

Emerging Technologies in Deepfake Detection

The field of deepfake detection continues to evolve rapidly, with several emerging technologies showing particular promise for the future of media authenticity assurance.

Blockchain-based content verification systems represent one of the most innovative approaches. By creating immutable records of media provenance on distributed ledgers, these systems establish trusted verification chains that can confirm content authenticity. Each piece of media receives a unique cryptographic signature at creation, allowing viewers to verify its origins and whether it has been altered since initial recording.
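The core mechanism behind such ledgers is a chain of hashes: each record commits to the media's fingerprint and to the previous record, so altering anything breaks every later link. The following is a minimal single-machine sketch of that mechanism, with hypothetical field names; it leaves out the distribution and consensus that an actual blockchain provides.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first record

def _hash_body(media_sha256: str, prev_hash: str) -> str:
    body = {"media_sha256": media_sha256, "prev_hash": prev_hash}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def make_record(media: bytes, prev_hash: str) -> Dict[str, str]:
    """Create a ledger record that links this media item to the previous one."""
    media_hash = hashlib.sha256(media).hexdigest()
    return {"media_sha256": media_hash, "prev_hash": prev_hash,
            "record_hash": _hash_body(media_hash, prev_hash)}

def verify_chain(chain: List[Dict[str, str]]) -> bool:
    """Check every record's hash and its link to the preceding record."""
    prev = GENESIS
    for rec in chain:
        expected = _hash_body(rec["media_sha256"], rec["prev_hash"])
        if rec["prev_hash"] != prev or rec["record_hash"] != expected:
            return False
        prev = rec["record_hash"]
    return True

# Build a two-record chain from hypothetical media payloads.
first = make_record(b"photo-bytes", GENESIS)
second = make_record(b"video-bytes", first["record_hash"])
chain = [first, second]
print("chain valid:", verify_chain(chain))
```

To check a specific file, a viewer would hash it and look for a record whose `media_sha256` matches; a file altered after registration produces a hash that appears nowhere in the chain.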

Quantum computing applications, though still in early stages, show potential for revolutionizing AI-based media authentication. Quantum algorithms could dramatically accelerate the processing capabilities of detection systems, allowing for more comprehensive analysis in less time. This emerging field may eventually enable real-time detection of even the most sophisticated deepfakes.

Multimodal detection approaches represent the current cutting edge in synthetic media detection techniques. These systems analyze multiple aspects of content simultaneously—examining visual elements, audio components, and metadata in combination. According to recent research, multimodal systems demonstrate significantly higher accuracy rates than single-mode detection methods, particularly against sophisticated deepfakes that might fool simpler systems.

This integration of multiple detection methodologies represents the most promising direction for future development, as it creates redundant verification layers that are more difficult to circumvent. As deepfake creators continue to refine their techniques, these multi-layered approaches will become increasingly essential for reliable detection.

Industry Applications and Use Cases

Media and Journalism

News organizations face unprecedented challenges in verifying the authenticity of digital content. Media outlets increasingly implement sophisticated verification workflows to maintain credibility in an era of rampant misinformation. These processes typically incorporate multiple fake news detection tools and human verification checkpoints before content publication.

Pre-publication authentication has become standard practice at major news organizations, with dedicated teams using specialized media credibility verification systems to analyze submitted photos and videos. These teams employ both automated tools and manual inspection techniques to identify potential manipulations before content reaches audiences.

Real-time fact-checking integration represents another important advancement. During breaking news coverage, verification systems work alongside journalists to rapidly assess incoming media for signs of manipulation. This approach helps prevent the inadvertent spread of deepfakes during critical news events when verification time is limited.

Legal and Forensic Applications

The legal system increasingly contends with digital evidence that may have been manipulated. Establishing evidentiary standards for digital media has become a critical concern for courts worldwide, with specific protocols emerging for video manipulation identification and authentication.

Court-admissible verification techniques now include rigorous image manipulation detection processes that can withstand legal scrutiny. Forensic experts employ specialized tools to analyze digital media evidence, creating detailed reports that document their authentication methodology and findings.

Digital forensics has become central to many investigations where media evidence plays a key role. Law enforcement agencies increasingly rely on image and video credibility analysis to establish facts in cases involving digital evidence. The ability to conclusively verify or debunk digital media can significantly impact case outcomes in today’s digital-evidence-heavy legal environment.

Social Media Platform Approaches

Major social platforms have implemented various measures to combat the spread of deepfakes. Facebook, X (formerly Twitter), and YouTube employ content moderation algorithms trained to identify markers of suspicious content in uploaded media. These systems flag potentially manipulated content for human review or apply warning labels to questionable posts.

Platform-specific policies regarding fraudulent media identification vary in their implementation and effectiveness. Some platforms remove confirmed deepfakes entirely, while others focus on labeling content to provide context. These approaches continue to evolve as platforms balance free expression concerns with the need to prevent harmful misinformation.

User reporting mechanisms serve as an important supplementary layer of protection. Most platforms now include specific options for reporting potentially manipulated media, allowing users to flag suspicious content for review. These crowdsourced identification systems help platforms identify problematic content that automated systems might miss.

Challenges in Deepfake Detection

Despite significant advances, deepfake detection faces several persistent challenges that complicate effective identification of synthetic media.

The fundamental challenge lies in the ongoing cat-and-mouse game between creators and detectors. As detection technology improves, deepfake creation methods evolve to circumvent these defenses. Current detection rates hover around 65% against the most sophisticated deepfakes, highlighting the significant gap that remains in video credibility assessment capabilities.

Technical limitations further constrain detection effectiveness. Current technologies struggle with highly realistic deepfakes, particularly those created using advanced generative adversarial networks (GANs). These sophisticated fakes often bypass conventional detection methods, necessitating continuous refinement of media credibility verification techniques.

False positives present another significant concern. Detection systems sometimes misidentify genuine content as fake, potentially undermining trust in authentic media. This reliability issue complicates deployment in high-stakes environments where accuracy is paramount.
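A short worked calculation shows why false positives matter so much when most content is genuine. The prevalence, detection rate, and false positive rate below are hypothetical numbers chosen for illustration, not measurements of any system.

```python
def flag_precision(prevalence: float, true_positive_rate: float,
                   false_positive_rate: float) -> float:
    """Share of flagged items that are actually fake (Bayes' rule)."""
    true_flags = prevalence * true_positive_rate
    false_flags = (1.0 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)

# Hypothetical scenario: 1% of uploads are deepfakes, the detector catches
# 90% of them, and it wrongly flags 5% of genuine uploads.
p = flag_precision(prevalence=0.01, true_positive_rate=0.90,
                   false_positive_rate=0.05)
print(f"share of flags that are real deepfakes: {p:.1%}")
```

In this scenario most flagged items are genuine, which illustrates why even a modest false positive rate can undermine trust when deepfakes are rare relative to authentic media.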

Resource requirements present practical implementation barriers. Advanced detection systems often demand substantial computational resources, limiting widespread deployment. This accessibility gap means that while enterprise organizations may have robust protection, smaller entities and individuals often lack access to the most effective detection tools.

Future Directions in Deepfake Detection

Several promising research areas are shaping the future of artificial intelligence for deepfake detection. Multimodal analysis approaches that combine visual, audio, and metadata examination show particular potential for improving detection accuracy against sophisticated fakes. These integrated approaches create more comprehensive detection frameworks that are harder to circumvent.

Proactive watermarking and content authentication systems represent another promising direction. Rather than solely detecting manipulations after the fact, these approaches embed verifiable markers in authentic content at creation. Digital signatures and invisible watermarks provide verification mechanisms that can establish content provenance and identify unauthorized alterations.
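The simplest form of an invisible watermark hides bits in the least significant bit of each pixel value. The sketch below shows that basic scheme with NumPy as an illustration of the embed-and-verify idea only; it is an assumption-level example, and LSB marks are fragile (they do not survive recompression), so production systems use more robust designs.

```python
import numpy as np

def embed_bits(pixels: np.ndarray, bits) -> np.ndarray:
    """Return a copy of a uint8 image with bits written into the first LSBs."""
    bits = np.asarray(bits, dtype=np.uint8) & 1
    flat = pixels.flatten()  # flatten() returns a copy, so the input is untouched
    if len(bits) > flat.size:
        raise ValueError("watermark is longer than the image")
    flat[:len(bits)] = (flat[:len(bits)] & 0xFE) | bits
    return flat.reshape(pixels.shape)

def extract_bits(pixels: np.ndarray, n: int) -> np.ndarray:
    """Read back the first n least significant bits."""
    return pixels.flatten()[:n] & 1

# Hypothetical 8x8 grayscale image and an 8-bit watermark.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(8, 8), dtype=np.uint8)
mark = np.array([1, 0, 1, 1, 0, 0, 1, 0], dtype=np.uint8)

marked = embed_bits(image, mark)
print("watermark recovered:", np.array_equal(extract_bits(marked, 8), mark))
```

Each pixel changes by at most one intensity level, which is why the mark is invisible to viewers while remaining machine-readable.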

Industry standardization efforts aim to create common benchmarks and certification processes for detection technologies. These initiatives seek to establish shared evaluation criteria and performance standards, enabling more effective comparison between different detection approaches and fostering innovation in visual content forgery detection.

Public awareness and education initiatives represent a crucial complementary strategy. Technical solutions alone cannot solve the deepfake challenge; informed users who understand how to identify potential manipulation play a vital role in mitigating risks. Educational programs that teach critical media literacy skills help create a more resilient public better equipped to navigate an increasingly synthetic media landscape.

Practical Guidelines for Verifying Suspicious Media

For individuals concerned about media authenticity, following a systematic verification process can help identify potential deepfakes:

- Check the source: Verify media comes from reputable sources with established credibility

- Cross-reference: Search for the same content across multiple sources to identify inconsistencies

- Examine metadata: Use metadata inspection or AI analysis tools to check file creation data and editing history

- Look for visual clues: Pay attention to unnatural facial movements, lighting inconsistencies, or blurring

- Use verification tools: Employ accessible deepfake detection software to analyze suspicious content
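For the metadata and cross-referencing steps above, a few basic file properties can be gathered with the Python standard library alone. This sketch reads only filesystem-level details and a content hash; it does not parse embedded metadata such as EXIF, and the function name and example path are hypothetical.

```python
import hashlib
import os
from datetime import datetime, timezone

def file_fingerprint(path: str) -> dict:
    """Collect size, last-modified time (UTC), and SHA-256 of a file."""
    stat = os.stat(path)
    sha = hashlib.sha256()
    with open(path, "rb") as fh:
        # Read in 1 MiB chunks so large videos do not need to fit in memory.
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            sha.update(chunk)
    modified = datetime.fromtimestamp(stat.st_mtime, tz=timezone.utc)
    return {
        "size_bytes": stat.st_size,
        "modified_utc": modified.isoformat(),
        "sha256": sha.hexdigest(),
    }

# Example call with a hypothetical path:
# print(file_fingerprint("suspicious_clip.mp4"))
```

Two copies of a clip with identical hashes are byte-for-byte the same file, so a differing hash between a viral copy and the original source is a cue to compare them more closely.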

Several red flags should trigger heightened scrutiny when reviewing digital content. Unnatural facial movements, particularly around the eyes and mouth, often indicate manipulation. Poor synchronization between audio and lip movements is another common indicator of a fake video. Unusual lighting effects, particularly inconsistent shadows or reflections, frequently appear in manipulated media.

For non-technical users, several accessible tools simplify the verification process. Browser extensions like Reality Defender provide one-click analysis of online media, while mobile apps offer on-the-go verification capabilities. These tools democratize access to manipulated media detection, empowering users without technical expertise to evaluate content authenticity.

When suspicious content is identified, reporting it promptly helps prevent further spread. Most social platforms offer specific mechanisms for reporting potentially manipulated media. These reports trigger review processes that can lead to content removal or labeling, helping protect others from misinformation.

As deepfake technology continues its rapid evolution, staying informed about the latest detection methods and maintaining a healthy skepticism toward sensational or unusual media becomes increasingly important. By combining technical tools with critical thinking skills, individuals can better navigate the complex landscape of authentic and synthetic media in 2024 and beyond.
