Artificial intelligence is changing how video is enhanced. AI video upscaling uses machine learning models to raise the resolution of low-resolution footage. Unlike traditional enhancement methods, AI-powered tools can reconstruct plausible detail, reduce artifacts, and produce results that interpolation alone cannot achieve.

As streaming platforms, film studios, and everyday content creators demand higher-quality video, AI video upscaling has moved into mainstream use. This article explains how machine learning is applied to video enhancement and where it is being used across multiple industries.

Understanding AI Video Upscaling

AI video upscaling is a process that enhances the resolution of digital videos using advanced artificial intelligence algorithms to generate higher-resolution images with improved detail rather than merely stretching pixels. This technology represents a fundamental shift in how we approach video quality improvement.

Traditional upscaling methods rely on fixed interpolation algorithms such as nearest neighbor, bilinear, or bicubic. These techniques compute each new pixel from its neighbors in the source frame, so they cannot add detail that is not already there. Nearest neighbor tends to look blocky or pixelated, while bilinear and bicubic interpolation tend to look soft or blurry, and fine details are lost in either case.
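To make the difference between interpolation methods concrete, here is a minimal sketch in plain Python. It treats a grayscale frame as a list of rows and implements nearest neighbor and bilinear interpolation (a simpler relative of bicubic). The function names are illustrative and not taken from any particular library.

```python
def upscale_nearest(img, factor):
    """Nearest neighbor: repeat every pixel factor x factor times."""
    out = []
    for row in img:
        wide = [p for p in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def upscale_bilinear(img, factor):
    """Bilinear: each output pixel is a weighted average of up to 4 source pixels."""
    h, w = len(img), len(img[0])
    out_h, out_w = h * factor, w * factor
    out = []
    for y in range(out_h):
        # Map the output row back to a fractional source row (corners stay aligned).
        sy = y * (h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            sx = x * (w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, w - 1)
            fx = sx - x0
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out
```

Applied to a hard edge such as `[[0, 255]]`, nearest neighbor keeps the edge sharp but blocky, while bilinear spreads it into a gradient. Neither method introduces information that was not in the source pixels.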

In contrast, AI-powered video enhancement leverages neural networks trained on paired low- and high-resolution images to intelligently reconstruct missing details. These sophisticated systems can reduce artifacts while producing sharper edges and more natural textures, resulting in a significantly improved viewing experience. According to Boris FX, this approach allows for much more realistic results compared to traditional methods.

The core problem that AI video upscaling solves is improving the visual quality of low-resolution footage by predicting and filling in missing pixel information. This technology excels at enhancing detail, reducing noise and compression artifacts, and maintaining temporal consistency across video frames—something particularly challenging in video processing.

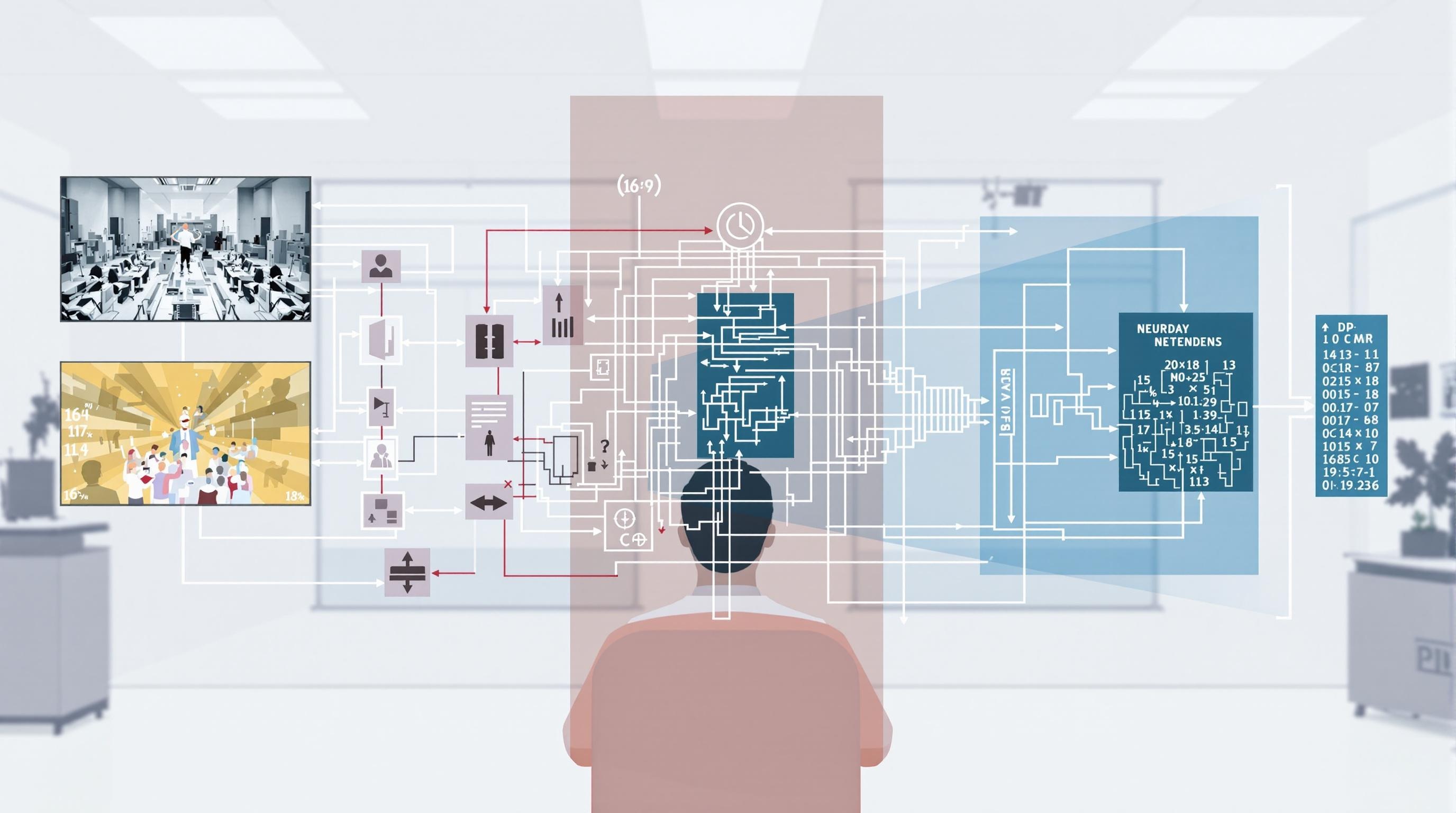

How Neural Networks Learn to Reconstruct Missing Pixel Information

Neural networks are the backbone of modern AI video upscaling. These complex systems learn to recognize patterns in video data and use that knowledge to intelligently predict what higher-resolution details should look like. During training, these networks analyze thousands of examples of low-resolution and high-resolution image pairs, learning the relationship between them.

When presented with new low-resolution footage, the neural network applies its learned patterns to reconstruct missing details. What makes this approach revolutionary is that the AI doesn’t simply apply a formula—it makes context-aware decisions about how to enhance each pixel based on surrounding information and learned patterns of what realistic video content looks like.

The Technology Behind AI Video Upscaling

Deep learning architectures form the foundation of modern video enhancement technology. These sophisticated systems employ various neural network architectures to transform low-quality video into higher-resolution outputs with remarkable fidelity.

The most prominent machine learning models used in video upscaling include:

- Convolutional Neural Networks (CNNs): These specialized networks excel at processing visual data by analyzing patterns and spatial relationships within frames.

- Generative Adversarial Networks (GANs): This innovative approach pits two neural networks against each other—one generating enhanced frames while the other evaluates their realism—resulting in increasingly convincing outputs.

- Transformer Models: Originally developed for natural language processing, transformers have recently been adapted for video processing, offering impressive results for complex scene reconstruction.
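The basic operation behind the CNNs listed above is a small kernel sliding across the frame. The sketch below shows that operation in plain Python with a hand-written kernel. In a trained network the kernel weights are learned from data rather than written by hand, and many such kernels are stacked in layers; the names here are illustrative.

```python
def conv2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the response map.

    As in most deep learning libraries, the kernel is not flipped, so strictly
    speaking this is cross-correlation.
    """
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for y in range(ih - kh + 1):
        row = []
        for x in range(iw - kw + 1):
            acc = 0.0
            for dy in range(kh):
                for dx in range(kw):
                    acc += image[y + dy][x + dx] * kernel[dy][dx]
            row.append(acc)
        out.append(row)
    return out


# Hand-written example kernel: responds where brightness changes from left to right.
EDGE_KERNEL = [[-1.0, 1.0]]
```

For example, `conv2d([[1, 2, 4]], EDGE_KERNEL)` returns `[[1.0, 2.0]]`, the brightness step between each pair of horizontal neighbors.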

Super resolution techniques enable significant quality improvements by learning a mapping from low-resolution inputs to higher-resolution outputs. A 2x model doubles each dimension and therefore quadruples the pixel count (for example, 1920x1080 to 3840x2160), and 4x models are also common. As NVIDIA explains, these technologies are becoming increasingly accessible through consumer hardware like its RTX GPUs.

The role of training data cannot be overstated in developing effective video upscaling algorithms. Neural networks require massive datasets of paired low- and high-resolution images to learn how to reconstruct details accurately. Datasets like DIV2K and carefully curated frame pairs from high-quality video sources serve as the foundation for teaching AI systems to differentiate between genuine details and artifacts.
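One common way to obtain such pairs is to start from high-resolution frames and downsample them to create the matching low-resolution inputs. The sketch below uses simple box averaging as a stand-in for the downsampling step; published datasets and real pipelines typically use bicubic resizing or more elaborate degradation models, and the function names are illustrative.

```python
def downsample_box(hr, factor):
    """Average each factor x factor block of `hr` into one low-resolution pixel."""
    h, w = len(hr), len(hr[0])
    if h % factor or w % factor:
        raise ValueError("image dimensions must be divisible by factor")
    lr = []
    for y in range(0, h, factor):
        row = []
        for x in range(0, w, factor):
            block = [hr[y + dy][x + dx] for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        lr.append(row)
    return lr


def make_training_pair(hr, factor):
    """Return (low_res_input, high_res_target) for one frame."""
    return downsample_box(hr, factor), hr
```

The network then sees the low-resolution frame as input and is trained to reproduce the original high-resolution frame.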

Neural Network Training Process

The training process for video enhancement neural networks is computationally intensive and methodologically sophisticated. It begins with collecting paired datasets of low-resolution and high-resolution video frames, often augmented through techniques like cropping and flipping to increase diversity.
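When augmenting paired data, the same crop and flip must be applied to both frames, otherwise the pair no longer lines up. A minimal sketch, assuming grayscale frames stored as lists of rows and an integer scale factor between the low- and high-resolution frame (all names are illustrative):

```python
import random


def paired_crop(lr, hr, scale, size, x, y):
    """Cut a size x size patch from `lr` at (x, y) and the matching patch from `hr`."""
    lr_patch = [row[x:x + size] for row in lr[y:y + size]]
    hr_patch = [row[x * scale:(x + size) * scale]
                for row in hr[y * scale:(y + size) * scale]]
    return lr_patch, hr_patch


def hflip(img):
    """Mirror an image left to right."""
    return [row[::-1] for row in img]


def augment_pair(lr, hr, scale, size, rng):
    """Random crop plus random horizontal flip, applied identically to both frames."""
    x = rng.randrange(len(lr[0]) - size + 1)
    y = rng.randrange(len(lr) - size + 1)
    lr_patch, hr_patch = paired_crop(lr, hr, scale, size, x, y)
    if rng.random() < 0.5:
        lr_patch, hr_patch = hflip(lr_patch), hflip(hr_patch)
    return lr_patch, hr_patch
```

Passing a seeded `random.Random` instance as `rng` keeps the augmentation reproducible between training runs.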

During training, the networks learn to minimize various loss functions—mathematical measurements of how far the AI’s output deviates from the ideal high-resolution reference. Common approaches include mean squared error and more advanced perceptual losses that better align with human visual perception. According to HitPaw, this training process requires substantial computational resources, including high-end GPUs or specialized hardware accelerators.
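As a rough illustration of the pixel-wise losses mentioned above, the sketch below computes mean squared error and mean absolute error between a predicted frame and its reference. Perceptual losses are omitted because they compare features extracted by a separate pretrained network, which is beyond a short example. The function names are illustrative.

```python
def mse_loss(pred, target):
    """Mean squared error: penalizes large deviations heavily."""
    diffs = [(p - t) ** 2
             for p_row, t_row in zip(pred, target)
             for p, t in zip(p_row, t_row)]
    return sum(diffs) / len(diffs)


def l1_loss(pred, target):
    """Mean absolute error: a common pixel-wise alternative to MSE."""
    diffs = [abs(p - t)
             for p_row, t_row in zip(pred, target)
             for p, t in zip(p_row, t_row)]
    return sum(diffs) / len(diffs)
```

Training adjusts the network weights to push a value like this toward zero across the whole dataset.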

Teaching AI to distinguish between noise and detail is particularly challenging. The neural networks must learn to suppress compression artifacts, film grain, and digital noise while preserving and enhancing genuine details like textures and edges. This delicate balance is achieved through carefully designed loss functions and extensive training with diverse video content.

Industry benchmarks for measuring upscaling quality include objective metrics like PSNR (Peak Signal-to-Noise Ratio) and SSIM (Structural Similarity Index), alongside subjective visual assessments that evaluate naturalness, sharpness, and the presence of artifacts.
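PSNR is simple enough to compute directly: it is 10 * log10(MAX^2 / MSE), where MAX is the largest possible pixel value and MSE is the mean squared error against the reference frame. A minimal sketch for 8-bit grayscale frames follows. SSIM is left out because it involves local windowed statistics that would not fit a short example.

```python
import math


def psnr(reference, test, max_value=255.0):
    """Peak Signal-to-Noise Ratio in decibels; higher means closer to the reference."""
    squared_error = 0.0
    count = 0
    for r_row, t_row in zip(reference, test):
        for r, t in zip(r_row, t_row):
            squared_error += (r - t) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Because PSNR only measures pixel-wise error, a slightly blurry result can score higher than a sharper one that looks better to viewers, which is why subjective assessment remains part of evaluation.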

Real-World Applications of AI Video Upscaling

The practical applications of AI video upscaling extend across numerous industries, transforming how we preserve, consume, and interact with video content.

Film restoration and preservation have been revolutionized by this technology. Historical footage, once limited by the recording technology of its era, can now be enhanced to reveal details previously invisible to viewers. Studios and archives are using video enhancement technology to breathe new life into classic films, documentaries, and historically significant recordings.

In broadcast media, AI upscaling is enabling the upgrade of standard definition content to HD and 4K resolutions for modern streaming platforms. This allows media companies to leverage their extensive content libraries for today’s high-resolution displays without expensive re-shoots or remasters.

The gaming industry uses AI upscaling to raise frame rates at a given output resolution. Technologies like NVIDIA’s DLSS (Deep Learning Super Sampling) let a game render internally at a lower resolution and then use AI to upscale each frame to the display resolution in real time, improving performance with little visible loss in quality. DLSS itself requires a supported NVIDIA RTX GPU.
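The performance gain comes from shading fewer pixels per frame. The arithmetic can be sketched as follows; the resolutions are illustrative examples, not the documented presets of any particular upscaler.

```python
def pixel_count(width, height):
    """Number of pixels in one frame."""
    return width * height


def rendered_fraction(render_res, output_res):
    """Fraction of the output pixels that the game actually renders before upscaling."""
    return pixel_count(*render_res) / pixel_count(*output_res)


# Rendering at 1920x1080 for a 3840x2160 display shades one quarter of the pixels.
quarter = rendered_fraction((1920, 1080), (3840, 2160))  # 0.25
```

The upscaling network has its own cost per frame, so the real speedup is smaller than this ratio alone suggests.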

Security and surveillance systems can also use video quality improvement software. Low-resolution security camera footage can be cleaned up to make it easier to review, potentially aiding law enforcement and security professionals in their investigations. Because the added detail is predicted by the model rather than recorded by the camera, enhanced footage is better suited to generating leads than to confirming an identification.

Mobile video enhancement has become increasingly important as consumers view more content on smartphones and tablets. AI-powered upscaling can optimize videos for different screen sizes and resolutions, ensuring optimal viewing experiences regardless of the device.

Leading AI Video Enhancement Software and Tools

The market for video enhancement tools has expanded rapidly in recent years, with solutions ranging from professional-grade software to accessible consumer applications.

Commercial solutions like Topaz Video Enhance AI (since renamed Topaz Video AI) and DVDFab Enlarger AI are among the most capable upscalers available to consumers. These applications use neural networks to deliver substantial quality improvements for a range of source materials. They offer intuitive interfaces that let users enhance videos with minimal technical expertise, though processing times can be long depending on hardware capabilities.

Open-source alternatives such as Real-ESRGAN and Video2X provide budget-conscious options for video quality improvement. While these may lack some of the refinement of commercial tools, they are capable options for enthusiasts willing to navigate a steeper learning curve.

Cloud-based video enhancement services are emerging as powerful options for those lacking high-end hardware. These platforms offload the intensive processing to remote servers, allowing users to enhance videos without investing in expensive GPUs. As TheMexpert notes, these services make advanced video processing accessible to a broader audience.

Mobile applications for on-the-go video enhancement have also appeared in app stores, bringing simplified versions of this technology to smartphones. While these can’t match the quality of desktop solutions, they offer convenient options for quick enhancements of mobile-captured footage.

Comparison of Popular Video Upscalers

When evaluating video enhancement software, several factors differentiate the available options:

- Feature sets: Higher-end tools offer more granular control over the upscaling process, including noise reduction, resolution targets, and batch processing capabilities.

- Performance benchmarks: Processing speed varies dramatically between solutions, with some prioritizing quality over speed and others focusing on faster results with moderate improvements.

- Pricing models: Options range from subscription-based services to one-time purchases and free open-source alternatives.

- User interface: Some tools cater to professional video editors with complex options, while others focus on simplicity for casual users.

The choice between video upscalers ultimately depends on the specific requirements of the project, available hardware, budget constraints, and the technical expertise of the user.

Technical Challenges in Video Upscaling

Despite impressive advances, AI video upscaling faces several significant technical hurdles that researchers and developers continue to address.

Handling motion and temporal consistency between frames presents a major challenge. Unlike image upscaling, video enhancement must ensure that details remain consistent across consecutive frames to avoid flickering or ghosting artifacts. Video enhancement algorithms must consider the temporal dimension, making the problem substantially more complex than static image enhancement.
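One rough way to spot flicker is to measure how much a region that should be static changes from frame to frame after upscaling. The sketch below is an assumption-laden diagnostic, not a standard metric: it is only meaningful for static content, since real motion also produces frame-to-frame differences. The function names are illustrative.

```python
def mean_abs_diff(a, b):
    """Average absolute pixel difference between two frames of equal size."""
    diffs = [abs(p - q)
             for a_row, b_row in zip(a, b)
             for p, q in zip(a_row, b_row)]
    return sum(diffs) / len(diffs)


def flicker_score(frames):
    """Mean change between consecutive frames; near 0 for a stable static scene."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    diffs = [mean_abs_diff(frames[i], frames[i + 1]) for i in range(len(frames) - 1)]
    return sum(diffs) / len(diffs)
```

Comparing this score on the same static crop before and after upscaling gives a quick indication of whether the model is introducing frame-to-frame instability.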

Balancing detail enhancement with artifact suppression requires careful algorithm design. Overly aggressive enhancement can introduce unnatural textures or exaggerate noise, while conservative approaches might fail to recover important details. This delicate balance is particularly challenging with heavily compressed source material.

Video compression artifacts present in most digital video complicate the upscaling process. Block artifacts, color banding, and motion compensation errors in the source material can be misinterpreted by AI systems as genuine details, potentially resulting in enhanced versions of these defects rather than their removal.

Computational efficiency remains a significant consideration, especially for real-time applications. The most sophisticated models require substantial processing power, limiting their use in streaming or gaming scenarios where immediate results are necessary.

Video interpolation for frame rate enhancement adds another layer of complexity, as the AI must not only improve spatial resolution but also synthesize entirely new frames to increase temporal resolution. This requires predicting motion vectors and generating intermediate frames that maintain visual coherence.
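To see why motion prediction is needed, consider the naive baseline of blending two neighboring frames. The sketch below is that baseline only, not how learned interpolators work, and the function name is illustrative.

```python
def blend_frames(frame_a, frame_b, t):
    """Linear cross-fade between two frames; t=0 gives frame_a, t=1 gives frame_b."""
    return [[(1 - t) * a + t * b for a, b in zip(a_row, b_row)]
            for a_row, b_row in zip(frame_a, frame_b)]


# A bright pixel moves from the left edge to the right edge between two frames.
before = [[100, 0, 0]]
after = [[0, 0, 100]]
midpoint = blend_frames(before, after, 0.5)  # [[50.0, 0.0, 50.0]]
```

The blended frame shows two half-bright copies of the object instead of one object in the middle. This is the ghosting artifact that motion-aware interpolation is designed to avoid.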

Latest Advancements in AI Video Enhancement Technology

The field of video enhancement is evolving rapidly, with several exciting developments pushing the boundaries of what’s possible.

Real-time upscaling capabilities have emerged in modern systems, particularly through hardware acceleration on specialized GPUs and neural processing units. This has enabled applications like live broadcast upscaling and in-game resolution enhancement that were previously impossible due to processing constraints.

Multi-frame super resolution techniques represent a significant advancement by aggregating information across multiple frames. By analyzing several consecutive frames, these systems can extract more detail than would be possible from a single frame, resulting in more accurate reconstructions with better temporal consistency.
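The simplest illustration of why multiple frames help is temporal averaging: if several frames show the same static scene with independent noise, averaging N of them reduces the noise standard deviation by roughly a factor of the square root of N. The sketch below assumes the frames are already perfectly aligned. Real multi-frame systems must first align frames using motion estimation and usually fuse them with a learned network rather than a plain average.

```python
def temporal_mean(frames):
    """Per-pixel average of several aligned frames of the same size."""
    n = len(frames)
    height, width = len(frames[0]), len(frames[0][0])
    return [[sum(frame[y][x] for frame in frames) / n for x in range(width)]
            for y in range(height)]
```

For example, three noisy observations `[[4, 6]]`, `[[6, 4]]`, and `[[5, 5]]` of a flat gray patch average to `[[5.0, 5.0]]`.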

Perceptual loss functions have improved visual quality by aligning AI output with human perception rather than simply minimizing pixel-wise differences. These sophisticated approaches train networks to prioritize the aspects of image quality that humans find most important, resulting in more visually pleasing results even when objective metrics might suggest otherwise.

Hardware acceleration has dramatically reduced processing times. NVIDIA’s consumer RTX GPUs, for example, include Tensor Cores dedicated to the matrix operations that neural networks rely on, making sophisticated video enhancement accessible to enthusiasts and professionals alike.

Video restoration pipelines now combine multiple enhancement techniques, including upscaling, denoising, deblurring, and color correction, into unified workflows. Addressing these aspects of video quality together produces better results than upscaling alone could achieve.

Practical Implementation Guide

For those looking to implement AI video upscaling in their projects, several practical considerations can help achieve optimal results.

Workflow recommendations vary depending on the specific video enhancement scenario. For archival film restoration, a careful multi-pass approach might be appropriate, while quick enhancements for social media might prioritize speed over maximum quality. Understanding the end-use case is crucial for selecting the right tools and settings.

Hardware requirements for optimal video enhancement performance include multi-core CPUs and high-end GPUs with ample VRAM. While some processing can occur on standard hardware, dedicated graphics cards with AI acceleration capabilities like NVIDIA’s RTX series dramatically improve processing times and enable more sophisticated models.

To achieve the best results with different source materials, consider these tips:

- Start with the highest quality source available—upscaling cannot recover details that were never captured

- For compressed footage, consider using dedicated artifact removal before upscaling

- Test different AI models, as some perform better on specific types of content (film, animation, digital video)

- Preserve a copy of the original footage before processing

- For lengthy projects, process a short sample first to verify settings
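The last tip above, processing a short sample first, also gives a rough estimate of the full processing time. A minimal sketch, assuming the time per frame stays roughly constant across the video (the function names are illustrative):

```python
def estimate_full_run(sample_seconds, sample_frames, total_frames):
    """Extrapolate total processing time in seconds from a timed sample."""
    seconds_per_frame = sample_seconds / sample_frames
    return seconds_per_frame * total_frames


def format_hms(seconds):
    """Format a duration in seconds as H:MM:SS."""
    whole = int(round(seconds))
    hours, remainder = divmod(whole, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:d}:{minutes:02d}:{secs:02d}"


# A 240-frame sample took 60 seconds; the full film is 90 minutes at 24 fps.
total = estimate_full_run(60.0, 240, 24 * 60 * 90)
estimate = format_hms(total)  # "9:00:00"
```

If the estimate is impractical, a lighter model or a lower target resolution can be tested on the same sample before committing to the full run.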

Common pitfalls to avoid include over-processing that introduces unnatural textures, unrealistic expectations about what can be recovered from heavily degraded footage, and excessively long processing times that may not yield proportionally better results. Balancing processing time with quality outcomes requires experimentation and understanding the diminishing returns of more intensive processing.

Future of AI Video Upscaling

The trajectory of video enhancement technology points toward continued innovation and broader accessibility.

Emerging trends in video enhancement techniques include more efficient neural network architectures that reduce computational requirements while maintaining or improving quality. Research into self-supervised and weakly-supervised learning may reduce the dependency on paired training data, potentially allowing models to learn from unpaired examples.

Potential breakthroughs in video quality improvement may come from new approaches to temporal modeling that better capture motion dynamics and scene understanding. As neural networks develop improved “comprehension” of video content beyond pixel patterns, they may make more intelligent decisions about enhancement.

Integration with other video processing technologies is creating comprehensive media enhancement pipelines. Future systems will likely combine upscaling with automated colorization, frame rate conversion, audio enhancement, and even content-aware editing to create end-to-end solutions for media transformation.

The democratization of high-quality video enhancement tools continues as the technology becomes more accessible through cloud platforms and consumer software. What once required specialized knowledge and equipment is increasingly available to content creators at all levels, empowering new creative possibilities.

Industry adoption is accelerating across entertainment, security, healthcare, and education sectors. As Project Aeon observes, the demand for higher-quality video experiences is driving investment and innovation in this technology, suggesting a bright future for AI video enhancement.

Conclusion

AI video upscaling represents a fundamental shift in how we approach video quality. Neural networks trained on paired low- and high-resolution footage can produce enhancements that interpolation-based methods cannot. From restoring historical footage to enabling real-time improvements in modern content, AI-powered video enhancement technology continues to change how video is produced and viewed.

As hardware capabilities advance and algorithms become more sophisticated, we can expect even more impressive results from video enhancement systems. The future promises not only better quality but also greater accessibility, allowing more creators to leverage these powerful tools.

